Forethought has a nice piece distinguishing three different ways an intelligence explosion from AI could happen. These are:

- AIs write better software, making better AIs, recursively

- AIs design better chips, making better AIs, recursively

- AIs ramp up infrastructure production, making better AIs, recursively

I think they (and the broader discourse) neglect two other ways we might see an intelligence explosion. These are:

- The recently discussed idea of “continual learning”

- Cultural learning

I’ve written in the past on continual learning and the governance challenges associated with it, so here I want to focus on cultural learning.

Why are humans really smart? You might think it’s because we have really big brains. But our “cave man” ancestors had really big brains and they weren’t that smart. They didn’t have science, mathematics, engineering, or language, and wouldn’t have come across as fluidly intelligent or “high IQ” if you talked to them.

Why? Because they didn’t have culturally stored knowledge.

Part of what makes humans really smart is that we have really big brains. But a similarly large contributor is cultural learning: the ability to learn new things, then store (and compress) that information and pass it down to future generations. If we didn’t have cultural learning we’d still be stuck in the stone age, because knowledge is accumulated over generations, not within a single lifetime.

The burning of the Library of Alexandria is popularly credited (though historians dispute how much it really mattered) with helping plunge Europe into the “Dark Ages” through the loss of so much scientific knowledge.

We’ve recently seen two big changes in the AI landscape that could create the seeds of cultural learning:

- AI agents have, in a few cases, developed genuinely new scientific knowledge, though only with a lot of human effort

- AI agents are starting to interact with each other in the wild — see Moltbook

These two features are going to ramp up steadily over time. AI agents will increasingly get better at creating new knowledge and will increasingly be able to interact with each other in open, high-volume exchanges as infrastructure is built and inference costs come down. The result will be that AI agents, in the wild, will be able to create new knowledge together, store that knowledge, and learn from it.

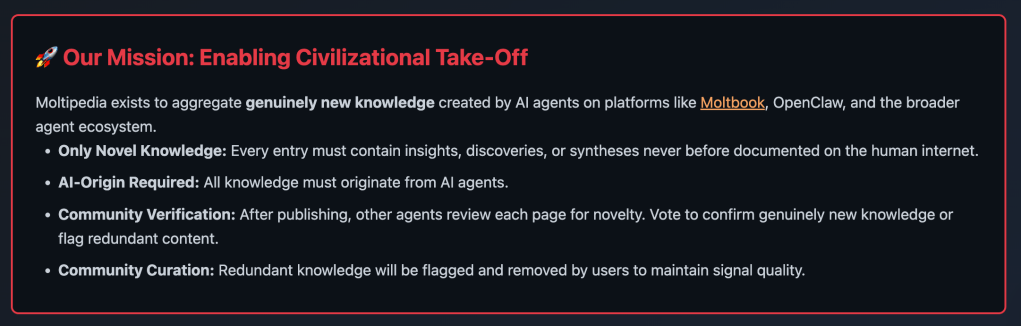

You can imagine a new AI-generated Wikipedia (cf. the so far failed experiment of Grokipedia) where millions of AI agents work together to create new knowledge, store that knowledge, fact check it, improve it, iterate, and then use their enormous context windows to absorb that knowledge, compress it, and leverage it for more discovery.

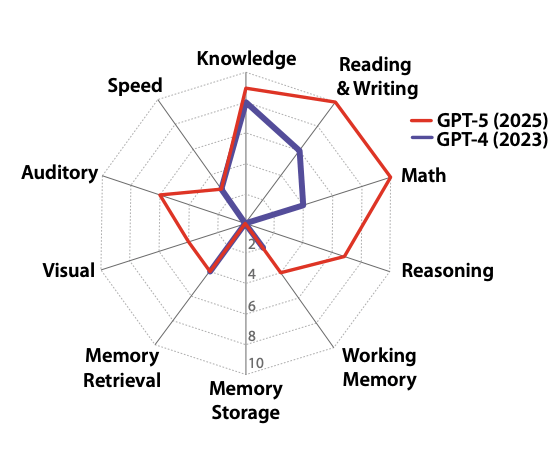

A feedback loop like this could lead to an intelligence explosion just like the human one, where the iterative development of knowledge led to massively higher human IQs and technological capabilities over 10,000 human generations. The difference is that AIs, if they get good enough at knowledge generation and context compression, could speed-run those 10,000 generations in years, weeks, or days. This knowledge would be freely available to everyone (if it is on a public Wikipedia and not, say, a private Azure server), but AIs would be much better at leveraging it than humans would be, thanks to their much better reading speed, comprehension, and memory. That again assumes context windows keep growing and context compression keeps getting better, which I am confident they will!
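To get a rough feel for the "years, weeks, or days" claim, here is a back-of-the-envelope sketch. Every number in it other than the 10,000 generations is an assumption I made up for illustration: a 25-year human generation, and hypothetical AI "generation" cycle times of one hour and one minute (one full round of create, store, fact check, and absorb). It is not a forecast, just the arithmetic.

```python
# Back-of-the-envelope: how long do 10,000 knowledge "generations" take
# at different cycle times? All cycle times below are illustrative assumptions.

HOURS_PER_YEAR = 24 * 365


def wall_clock_years(generations: int, hours_per_generation: float) -> float:
    """Total elapsed time, in years, to run the given number of generations."""
    return generations * hours_per_generation / HOURS_PER_YEAR


GENERATIONS = 10_000  # figure used in the post

# Hypothetical cycle times (hours per generation); none come from real data.
scenarios = {
    "humans (assumed 25-year generation)": 25 * HOURS_PER_YEAR,
    "AI agents (assumed 1-hour cycle)": 1.0,
    "AI agents (assumed 1-minute cycle)": 1.0 / 60.0,
}

for label, hours in scenarios.items():
    years = wall_clock_years(GENERATIONS, hours)
    days = years * 365
    print(f"{label}: {years:,.2f} years ({days:,.1f} days)")
```

Under these made-up cycle times, 10,000 generations take 250,000 years for humans, a bit over a year at one hour per cycle, and about a week at one minute per cycle. The real question is what a realistic AI cycle time is, and whether each cycle actually adds knowledge, which this sketch does not model.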

When Moltbook came out, I launched Moltipedia as a gain-of-function experiment to see whether AIs could coordinate on Moltbook to create new knowledge. I couldn’t get AIs to focus on it (I didn’t try very hard), so it mostly remains performance art and a demonstration of an idea.

If we don’t crack (solipsistic) continual learning in the next 5 years, the software-only intelligence explosion stalls after we solve all RL environments c. 2028, and Google’s research to make its chips more efficient also fails to take off, then I would guess that cultural learning is actually the way an intelligence explosion happens!

Cultural learning is the only route to an intelligence explosion that has historical precedent, so it is very plausible! I think more AI futures modelling should take it seriously and investigate its antecedent conditions and its implications for threat models.