Anthropic’s RSP Version 3 Appendix has commitments related to competitors. They intend for these commitments to help them escape a collective action problem, saying that “commitments like this may help avoid an inadvertent ‘race to the bottom’.” The whole point of these principles, as I understand them, is that if all labs followed them, we’d be in a good situation.

Unfortunately, as Oliver Habryka helped me notice, they don’t work.

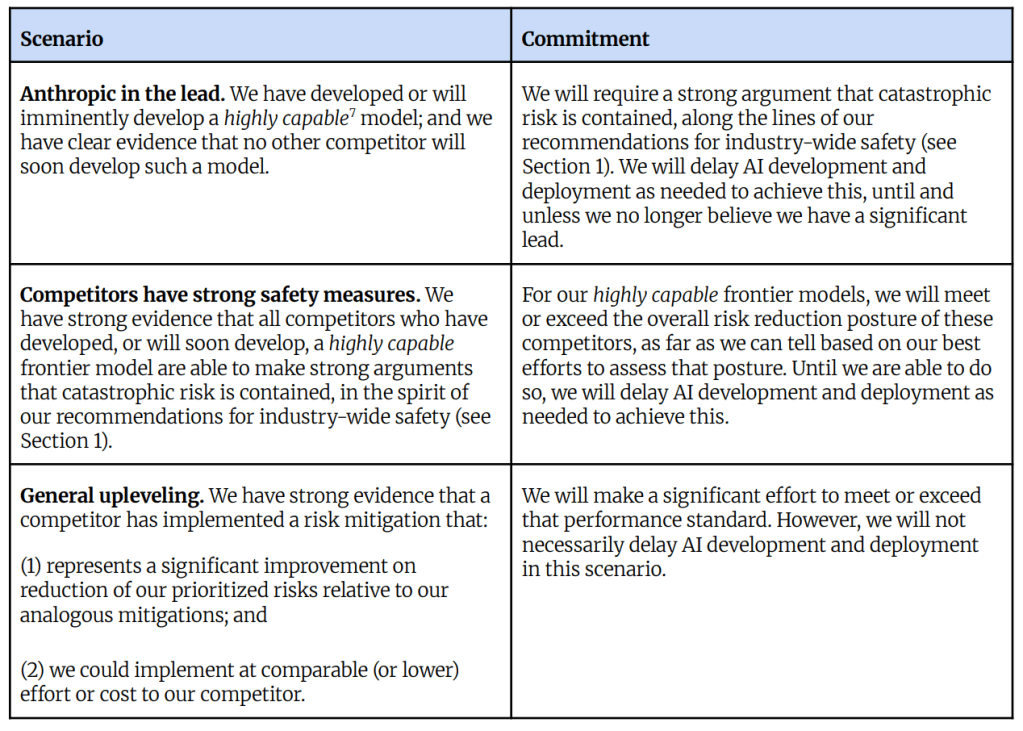

The RSP makes two main commitments:

- If Anthropic is in the lead, they’ll pause as long as needed before making a “Highly Capable Model”, i.e. a very dangerous model, a category that roughly covers both superintelligence and an intelligence explosion.

- If Anthropic’s competitors are developing a “Highly Capable Model” and can do so safely, Anthropic will pause until they meet the same safety bar.

The second commitment works well. It means that if other companies are releasing a safe superintelligence / intelligence explosion Anthropic won’t mess it up by releasing an unsafe version. If all companies followed this, then once a company was about to release a safe superintelligence, the other companies would stop until they could at least meet that company’s standards.

This addresses a collective action problem where one company might be about to release superintelligence, leading other companies to race and release an unsafe superintelligence before the lead company can release safe superintelligence. In a world where the big three AI companies are all roughly at the same capability level, but one of the labs is much safer than the others, everyone following this commitment could avoid a dangerous race to the bottom. If widely followed it also essentially means that if several companies were about to develop superintelligence, they’d stop and let only the safest company develop it.

Unfortunately, the first commitment does not lead to good incentives. As Habryka points out, it is a commitment to race.

The ideal outcome — from my perspective but I think also from Anthropic’s — would be one where if all of the other top labs paused, Anthropic would also pause. I think a conditional commitment should reflect this. But Anthropic’s first commitment says that if all of the other labs paused, Anthropic would still race ahead until they had a significant lead, and then they would pause.

The commitment specifically reads:

If: We have developed or will imminently develop a highly capable model; and we have clear evidence that no other competitor will soon develop such a model.

Then: We will require a strong argument that catastrophic risk is contained, along the lines of our recommendations for industry-wide safety (see Section 1). We will delay AI development and deployment as needed to achieve this, until and unless we no longer believe we have a significant lead.

The last clause of the commitment is the kicker. Without it, the commitment’s sentential logic would say that if Anthropic was about to develop superintelligence, but other companies refused to develop superintelligence, Anthropic would respect that pause until they were pretty sure their development process was safe. But with that clause, it says that if Anthropic was about to develop superintelligence, and other companies refused to develop superintelligence, they would race ahead and develop superintelligence anyway, until they were comfortable enough in their lead.

If you want to have a set of commitments that gets you out of a collective action problem, or a race to the bottom, you need these commitments to be such that if everyone followed them you would avoid the collective action problem and be in the world you want to be in. But if everyone followed this first principle, everyone would keep racing until they were sure they had enough lead time to work on safety while still being far out in front.
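To make that concrete, here is a toy sketch of my reading of the commitment. Everything in it is my own simplification and not anything from the RSP: the `Lab` class, the made-up `progress` scores, the `SIGNIFICANT_LEAD` threshold, and the assumption that no lab has a safety argument yet are all hypothetical. It only asks which labs would be bound to pause if every lab followed the same rule.

```python
from dataclasses import dataclass

# Hypothetical threshold for what counts as a "significant lead".
SIGNIFICANT_LEAD = 2.0


@dataclass
class Lab:
    name: str
    progress: float  # made-up capability score; higher means closer to a Highly Capable Model


def lead_over_rivals(lab, labs):
    """How far `lab` is ahead of its closest rival (zero or negative if not ahead)."""
    best_rival = max(other.progress for other in labs if other is not lab)
    return lab.progress - best_rival


def bound_to_pause_current(lab, labs):
    """My reading of the current wording: the delay only binds while the lab
    believes it has a significant lead."""
    return lead_over_rivals(lab, labs) >= SIGNIFICANT_LEAD


def bound_to_pause_without_lead_clause(lab, labs):
    """Variant with the lead-time clause dropped: the delay binds until there is
    a strong safety argument. This sketch assumes no lab has one yet."""
    return True


def who_pauses(rule, labs):
    """Names of the labs that `rule` binds to pause, if every lab follows it."""
    return [lab.name for lab in labs if rule(lab, labs)]


neck_and_neck = [Lab("A", 10.0), Lab("B", 10.0), Lab("C", 10.0)]
one_leader = [Lab("A", 13.0), Lab("B", 10.0), Lab("C", 10.0)]

print(who_pauses(bound_to_pause_current, neck_and_neck))              # []
print(who_pauses(bound_to_pause_current, one_leader))                 # ['A']
print(who_pauses(bound_to_pause_without_lead_clause, neck_and_neck))  # ['A', 'B', 'C']
```

In the neck-and-neck case, the current wording binds nobody, which is the collective action problem the commitment was supposed to solve. Only the variant without the lead clause has every lab pausing together.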

The commitment is strictly incompatible with a pause until we are sure superintelligence is safe.

If Anthropic wants to make conditional commitments that get them out of a collective action problem rather than encouraging it, I think they need to do two things:

- First, drop the clause about lead time. Simply say “if we have developed or will imminently develop a highly capable model, and we have clear evidence that no other competitor will soon develop such a model, then we will require a strong argument that catastrophic risk is contained, along the lines of our recommendations for industry-wide safety, delaying AI development and deployment as needed to achieve this.” More modestly, change the clause to say that Anthropic will pause until other companies are about to get ahead, which guarantees that Anthropic doesn’t fall behind rather than guaranteeing that Anthropic stays way out in front. This is at least compatible with a pause, rather than being a commitment not to pause even if your competitors do.

- Second, strengthen the commitment by saying that Anthropic will pause if other companies adopt the same conditional commitments.

These two changes would result in a commitment that genuinely helps resolve the collective action problem rather than making it worse. It would mean that if other companies agreed to the principles, all of them would refuse to develop a Highly Capable Model until Anthropic’s recommendations for industry were followed. Instead, the sentential logic of the current commitment is such that if all AI companies followed it they would simply race.

At the very least, something Anthropic can do that is robust to economic disincentives, investor pressures, etc., is to add the words “at least” to the final clause of their first commitment, so that it reads: “We will delay AI development and deployment as needed to achieve this, at least until and unless we no longer believe we have a significant lead.” This would at least allow for the possibility of a pause rather than binding them to the mast by requiring them to race.

Commitments below, and in Appendix A here.
